Apache Airflow ETL in Google Cloud

Are you thinking about running Apache Airflow on Google Cloud? It’s a popular choice for running a complex set of tasks, such as Extract, Transform, and Load (ETL) or data analytics pipelines. Apache Airflow uses a Directed Acyclic Graph (DAG) to order and relate the tasks in your workflows, and it lets you schedule each workflow to run at a set time. Together, these give you a powerful way to handle scheduling and dependency graphing.

So what are the different ways to run Apache Airflow on Google Cloud? The wrong choice could reduce availability or increase costs: the infrastructure could fail, or you may need to create many environments, such as dev, staging, and prod. In this post, we’ll look at three ways to run Apache Airflow on Google Cloud and discuss the pros and cons of each approach. For each approach, we provide Terraform code that you can find on GitHub, so you can try it out for yourself.

Note: The Terraform code used in this article is organized into directories. The files under modules are no different in format from ordinary Terraform code. If you’re a developer, think of the modules directory as a kind of library, and of main.tf as the place where the actual business code goes. In other words, start with main.tf and put shared code in directories such as modules or library.

Let’s look at our three ways to run Apache Airflow.

1: Compute Engine

A common way to run Airflow on Google Cloud is to install and run Airflow directly on a Compute Engine VM instance. The advantages of this approach:

- It’s cheaper than the others.

- It only requires an understanding of virtual machines.

On the other hand, there are also disadvantages:

- You have to maintain the virtual machine.

- It’s less available.

The disadvantages can be substantial, but if you’re thinking about adopting Airflow, you can use Compute Engine to do a quick proof of concept.

First, create a Compute Engine instance with the following Terraform code (for brevity, some of the code has been omitted). The allow block is a firewall setting: 8080 is the default port for the Airflow web UI, so it should be open. Feel free to change the other settings.

    # main.tf
    module "gcp_compute_engine" {
      source       = "./modules/google_compute_engine"
      service_name = local.service_name

      region       = local.region
      zone         = local.zone
      machine_type = "e2-standard-4"

      allow = {
        ...
        2 = {
          protocol = "tcp"
          ports    = ["22", "8080"]
        }
      }
    }

The google_compute_engine directory, which main.tf above references as source, contains the following file. Its code takes the values we passed in earlier and actually creates the instance for us. Notice how it takes in machine_type.

    # modules/google_compute_engine/google_compute_instance.tf
    resource "google_compute_instance" "default" {
      name         = var.service_name
      machine_type = var.machine_type
      zone         = var.zone
      ...
    }
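
For the module to accept these inputs, each one needs a matching variable declaration. The module's actual declarations are not shown in this post, so the following is only a minimal sketch of what they might look like. The file name, the descriptions, and the default value are assumptions, and the region and allow inputs are left out for brevity.

```hcl
# modules/google_compute_engine/variables.tf (hypothetical file name)

variable "service_name" {
  description = "Name given to the Compute Engine instance."
  type        = string
}

variable "machine_type" {
  description = "Compute Engine machine type, for example e2-standard-4."
  type        = string
  default     = "e2-standard-4" # assumed default; main.tf sets it explicitly
}

variable "zone" {
  description = "Zone in which the instance is created."
  type        = string
}
```

Declaring a type for each variable lets Terraform reject a mistyped input at plan time, before any resource is created.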

Run the code you wrote above with Terraform:

    $ terraform apply

Wait for a few moments and an instance will be created on Compute Engine. Next, you’ll need to connect to the instance and install Airflow — see the official documentation for instructions. Once installed, run Airflow.

You can now access Airflow through your browser! If you plan to run Airflow on Compute Engine, you’ll need to be extra careful with your firewall settings. Even if the password is compromised, only authorized users should be able to access it. Since this is a demo, we’ve made it accessible with minimal firewall settings.
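
One way to tighten access is to limit the source IP ranges in the firewall rule. The module's real firewall code is omitted in this post, so the sketch below is only an assumption about how an allow map like the one in main.tf could be turned into a google_compute_firewall rule. The variable names, the rule name, and the example CIDR range are all hypothetical.

```hcl
variable "network" {
  description = "Name or self link of the VPC network the instance is attached to."
  type        = string
}

variable "allow" {
  description = "Firewall rules keyed by an arbitrary index."
  type = map(object({
    protocol = string
    ports    = list(string)
  }))
}

variable "allowed_source_ranges" {
  description = "CIDR ranges allowed to reach SSH and the Airflow web UI."
  type        = list(string)
  default     = ["203.0.113.0/24"] # documentation-only range; replace with your own
}

resource "google_compute_firewall" "airflow" {
  name    = "airflow-restricted-access" # hypothetical rule name
  network = var.network

  # One allow block is generated per entry in var.allow.
  dynamic "allow" {
    for_each = var.allow
    content {
      protocol = allow.value.protocol
      ports    = allow.value.ports
    }
  }

  # Only traffic from these ranges matches this rule.
  source_ranges = var.allowed_source_ranges
}
```

Keeping the source ranges in a variable means a demo setup and a locked-down setup can share the same module and differ only in the value passed in.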

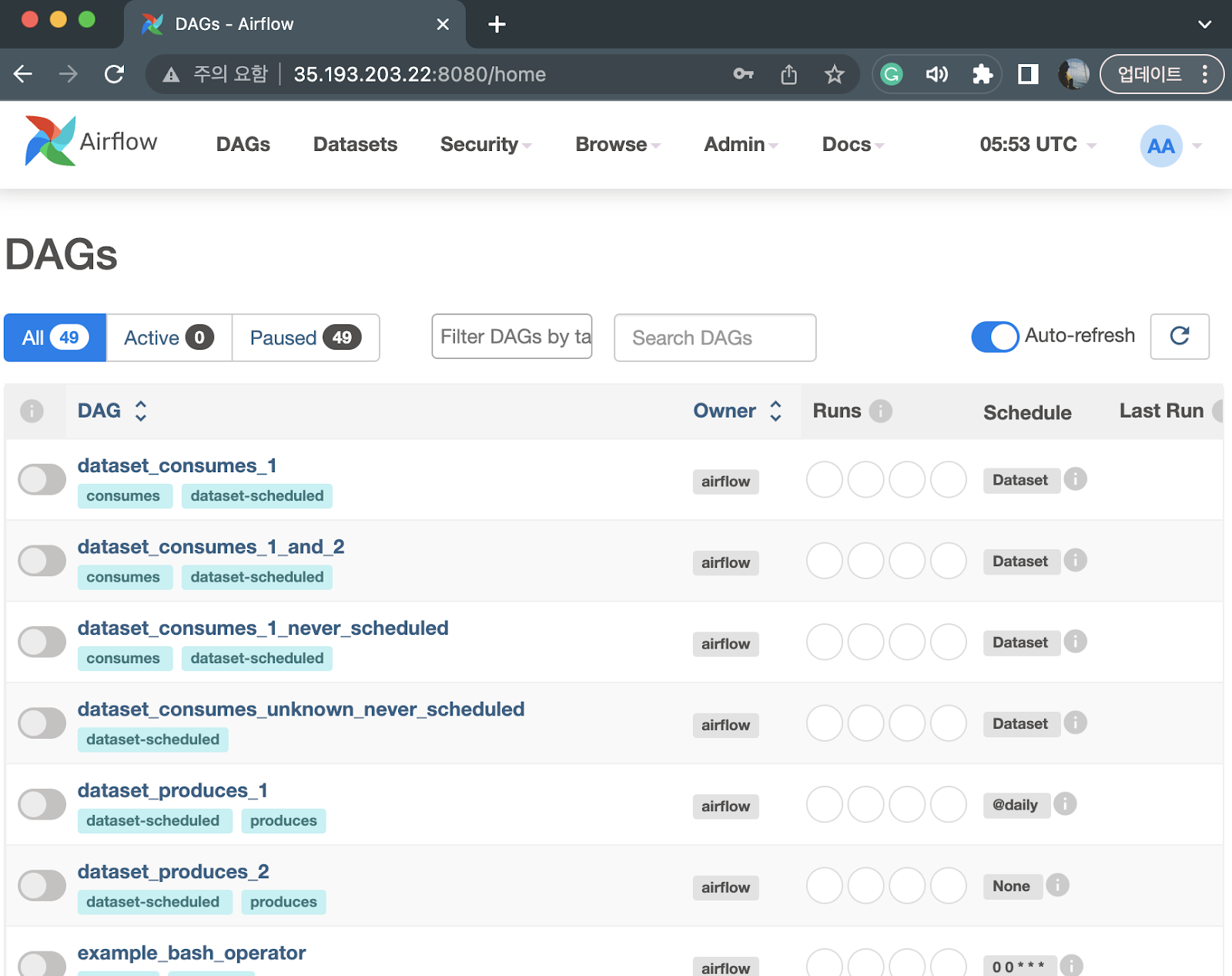

After logging in, you should see a screen like the one below. You’ll also see a sample DAG provided by Airflow. Take a look around the screen.

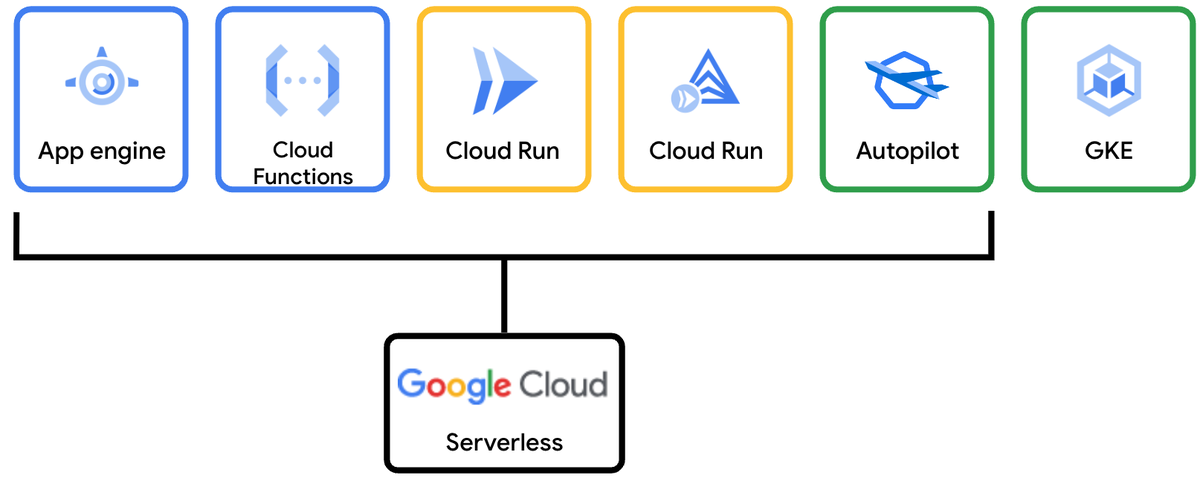

2: GKE Autopilot

The second way to run Apache Airflow on Google Cloud is with Kubernetes, made very easy with Google Kubernetes Engine (GKE), Google’s managed Kubernetes service. You can also use GKE’s Autopilot mode of operation, which helps you avoid running out of compute resources and automatically scales your cluster based on your needs. GKE Autopilot is serverless, so you don’t have to manage your own Kubernetes nodes.

GKE Autopilot offers high availability and scalability. You can also leverage the powerful Kubernetes ecosystem. For example, you can use the kubectl command for fine-grained control of workloads and monitor them alongside other business services in your cluster. However, if you’re not very familiar with Kubernetes, you may end up spending a lot of time managing Kubernetes instead of focusing on Airflow with this approach.

All right, so we’re going to create a GKE Autopilot cluster first. The Terraform module does the minimal setup for us:

    # main.tf
    module "google_kubernetes_engine" {
      source       = "./modules/google_kubernetes_engine"
      project_id   = var.project_id
      service_name = local.service_name
      region       = local.region
      network_id   = module.google_compute_engine.network_id
    }

The modules/google_kubernetes_engine.tf file is organized as shown below. Note that enable_autopilot is set to true, and that the module also includes network configuration. You can check out the full code on GitHub.

    # modules/google_kubernetes_engine.tf
    resource "google_container_cluster" "this" {
      project          = var.project_id
      name             = "${var.service_name}-gke-cluster"
      location         = var.region
      enable_autopilot = true
      network          = var.google_compute_network_id

      ip_allocation_policy {}
    }

Wow, we’re done already. Next, run the generated code to create a GKE Autopilot cluster:

    $ terraform apply

Next, you’ll need to configure cluster access so that you can check the status of GKE Autopilot using the kubectl command. Please refer to the official documentation for the relevant configuration.

Now deploy Airflow via Helm to the created GKE Autopilot cluster:

    # helm_main.tf
    resource "helm_release" "airflow" {
      name             = "airflow"
      repository       = "https://airflow.apache.org"
      chart            = "airflow"
      version          = "1.9.0"
      namespace        = "airflow"
      create_namespace = true
      wait             = false

      depends_on = [
        module.google_kubernetes_engine
      ]
    }
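
For helm_release to reach the new cluster, the Helm provider needs to know the cluster endpoint and credentials. The post does not show this part, so here is a rough sketch under two assumptions: version 2.x of the Helm provider (which uses a nested kubernetes block), and two hypothetical module outputs named endpoint and cluster_ca_certificate that you would add to the module yourself.

```hcl
# Hypothetical outputs, added inside the google_kubernetes_engine module.
output "endpoint" {
  value = google_container_cluster.this.endpoint
}

output "cluster_ca_certificate" {
  value = google_container_cluster.this.master_auth[0].cluster_ca_certificate
}

# Hypothetical provider configuration, placed in the root module.
# Access token of the identity that runs Terraform.
data "google_client_config" "default" {}

provider "helm" {
  kubernetes {
    host                   = "https://${module.google_kubernetes_engine.endpoint}"
    token                  = data.google_client_config.default.access_token
    cluster_ca_certificate = base64decode(module.google_kubernetes_engine.cluster_ca_certificate)
  }
}
```

The cluster returns its CA certificate base64-encoded, which is why the sketch decodes it before handing it to the provider.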

Deploy it again via Terraform:

    $ terraform apply

Now, if you run the kubectl command, you should see something similar to the following:

    $ kubectl get pods -n airflow
    NAME                      READY   STATUS    RESTARTS   AGE
    airflow-postgresql-0      1/1     Running   0          25m
    airflow-redis-0           1/1     Running   0          25m
    airflow-scheduler-tvqgq   2/2     Running   0          18m
    airflow-statsd-ph5p6      1/1     Running   0          25m
    airflow-triggerer-r5q2h   2/2     Running   0          25m
    airflow-webserver-lc6gj   1/1     Running   0          25m
    airflow-worker-0          2/2     Running   0          25m

Once you’ve verified that your pods are up and running, set up port forwarding to the Airflow webserver service so you can reach the web UI:

    $ kubectl port-forward svc/airflow-webserver -n airflow 8080
    Forwarding from 127.0.0.1:8080 -> 8080
    Forwarding from [::1]:8080 -> 8080

Now try connecting to localhost:8080 in your browser.

If you want to customize the Airflow settings, you’ll need to override the Helm chart’s values. You can do this by downloading the chart’s values.yaml file and managing your own copy. Pass the file to the release through the values argument, as shown below. Make sure that template variables such as repo and branch are referenced in the YAML file:

    # helm_main.tf
    resource "helm_release" "airflow" {
      name             = "airflow"
      repository       = "https://airflow.apache.org"
      chart            = "airflow"
      version          = "1.9.0"
      namespace        = "airflow"
      create_namespace = true
      wait             = false

      values = [templatefile("../manifests/airflow/values.yaml", {
        repo   = "git@github.com:jybaek/example.git"
        branch = "main"
      })]
    }
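
If you would rather not maintain a separate template file, the same two settings could be passed inline with yamlencode. The sketch below assumes that the template populates the chart's dags.gitSync values, which the post does not confirm, and the SSH secret name is hypothetical.

```hcl
locals {
  # Inline equivalent of a small values.yaml override.
  airflow_values = yamlencode({
    dags = {
      gitSync = {
        enabled = true
        repo    = "git@github.com:jybaek/example.git"
        branch  = "main"
        # An SSH repo URL needs a key. This secret name is hypothetical,
        # and the secret must already exist in the airflow namespace.
        sshKeySecret = "airflow-git-ssh-secret"
      }
    }
  })
}

resource "helm_release" "airflow" {
  name             = "airflow"
  repository       = "https://airflow.apache.org"
  chart            = "airflow"
  version          = "1.9.0"
  namespace        = "airflow"
  create_namespace = true
  wait             = false

  values = [local.airflow_values]
}
```

This is an alternative to the templatefile version above, not an addition to it: only one helm_release named airflow can exist in the configuration.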

3: Cloud Composer

The third way is to use Cloud Composer, a fully managed data workflow orchestration service on Google Cloud. As a managed service, Cloud Composer makes it really simple to run Airflow, so you don’t have to worry about the infrastructure on which Airflow runs. It presents fewer options, however. For example, in the less common case where you need to share storage between DAGs, you cannot. You may also need to take care to balance CPU and memory usage, as you have less ability to customize those options.

Take a look at the code below:

    # main.tf
    module "google_cloud_composer" {
      source           = "./modules/google_cloud_composer"
      environment_size = "ENVIRONMENT_SIZE_SMALL"
      network_id       = module.google_compute_engine.network_id
      subnetwork_id    = module.google_compute_engine.subnetwork_id
      service_account  = module.gcp.service_account_name
      project_id       = var.project_id
      region           = local.region
      service_name     = local.service_name
    }

If you look at the file stored under the modules directory, you’ll notice that environment_size is passed in and used:

    # modules/google_cloud_composer/google_composer_environment.tf
    resource "google_composer_environment" "this" {
      ...
      config {
        software_config {
          image_version = "composer-2-airflow-2"
        }

        environment_size = var.environment_size

        node_config {
          network         = var.google_compute_network_id
          subnetwork      = var.google_compute_subnetwork_id
          service_account = var.google_service_account_name
        }
      }
    }

As a side note, you can restrict a variable to a set of valid values by adding a validation block with a condition, as shown below:

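    # modules/google_cloud_composer/variables.tf
    variable "environment_size" {
      description = "Size of the Cloud Composer environment."
      type        = string

      # Reject any value outside the three standard sizes.
      validation {
        condition     = contains(["ENVIRONMENT_SIZE_SMALL", "ENVIRONMENT_SIZE_MEDIUM", "ENVIRONMENT_SIZE_LARGE"], var.environment_size)
        error_message = "The environment_size must be ENVIRONMENT_SIZE_SMALL, ENVIRONMENT_SIZE_MEDIUM, or ENVIRONMENT_SIZE_LARGE."
      }
    }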

Note that Cloud Composer also supports Custom mode, which is different from other cloud service providers’ managed Airflow services. In addition to specifying standard environments such as ENVIRONMENT_SIZE_SMALL, ENVIRONMENT_SIZE_MEDIUM, and ENVIRONMENT_SIZE_LARGE, you can also control CPU and memory directly.
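
As a rough illustration of that direct control, the google_composer_environment resource accepts a workloads_config block for Composer 2 environments. The resource name, environment name, and region below are hypothetical, and the numbers are placeholders rather than sizing recommendations.

```hcl
resource "google_composer_environment" "custom" {
  name   = "example-custom-sizing" # hypothetical name
  region = "us-central1"           # assumed region

  config {
    software_config {
      image_version = "composer-2-airflow-2"
    }

    # CPU, memory, and storage are set per Airflow component.
    workloads_config {
      scheduler {
        cpu        = 0.5
        memory_gb  = 1.875
        storage_gb = 1
        count      = 1
      }
      web_server {
        cpu        = 0.5
        memory_gb  = 1.875
        storage_gb = 1
      }
      worker {
        cpu        = 0.5
        memory_gb  = 1.875
        storage_gb = 1
        min_count  = 1
        max_count  = 3
      }
    }
  }
}
```

The worker block also carries the autoscaling bounds, so min_count and max_count are where you would cap cost for a small environment.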

Now, let’s deploy with Terraform:

    $ terraform apply

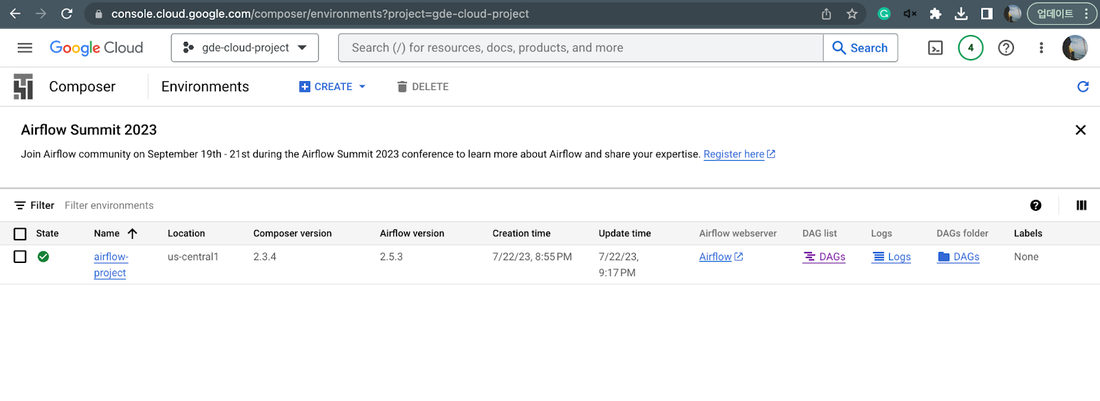

Now, if you go to the Google Cloud console and look in the Composer menu, you should see the resource you just created:

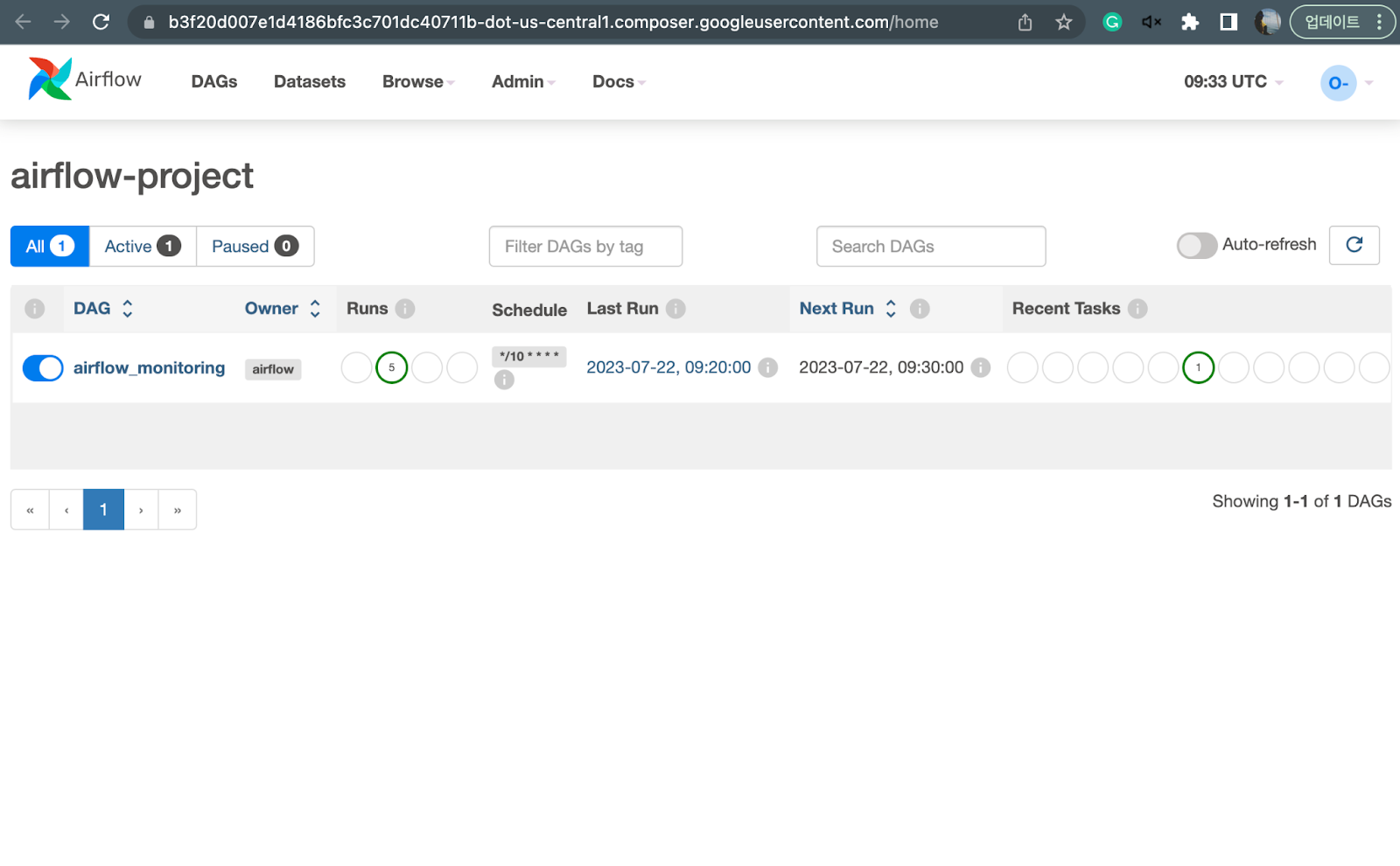

Finally, let’s connect to Airflow by clicking the link in the Airflow webserver entry above. If you have the correct IAM permissions, you should see the Airflow web UI.

Wrap up

If you’re going to run Airflow in production, there are three things you need to think about: cost, performance, and availability. In this article, we’ve discussed three different ways to run Apache Airflow on Google Cloud, each with its own character, pros, and cons.

Note that these are the minimum criteria for choosing an Airflow environment. If you’re running a side project on Airflow, coding in Python to create a DAG may be sufficient. However, if you want to run Airflow in production, you’ll also need to properly configure Airflow Core (concurrency, parallelism, SQL pool size, etc.), the Executor (LocalExecutor, CeleryExecutor, KubernetesExecutor, …), and so on.
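
On Cloud Composer, some of these Airflow Core settings can be set through airflow_config_overrides, with keys written as section-option. The sketch below is an assumption about how that might look: the names and values are placeholders, and because Cloud Composer blocks some options from being overridden, check which ones are permitted for your version.

```hcl
resource "google_composer_environment" "tuned" {
  name   = "example-tuned" # hypothetical name
  region = "us-central1"   # assumed region

  config {
    software_config {
      image_version = "composer-2-airflow-2"

      # Keys use the form "<section>-<option>" from airflow.cfg.
      # Values are always strings.
      airflow_config_overrides = {
        "core-parallelism"              = "32"
        "core-max_active_tasks_per_dag" = "16"
      }
    }
  }
}
```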