Ask your documents: Document AI and PaLM 2 for question answering

Documents, physical or digital, contain a goldmine of information—assuming all that data and content can actually be leveraged to help employees to do their jobs. Internal IT and content management teams have long sought to provide knowledge workers with the ability to interact with a document — or better yet, a corpus of documents — without needing to manually dig through them.

This goal went unfulfilled for years because, prior to generative AI models such as PaLM 2, technologies struggled to provide the contextual understanding required to answer questions across different document types. Today, however, developers can build an “Ask your documents” tool for employees by leveraging Google Cloud Document AI, text embedding models, and PaLM 2. In this post, we will show you how.

Why use Document AI and PaLM 2 to build a document Q&A application

Document Question-Answering (Document Q&A) involves extracting information from a given document to answer questions in natural language. The use cases applicable to this type of workflow cover a wide variety of industries and domains. For example:

- Lawyers and legal professionals can use Document Q&A to search through legal documents, statutes, and case law to find relevant information and precedents for their cases.

- Students and educators can benefit from Document Q&A to better understand concepts in research papers, textbooks, and educational materials.

- IT support teams can employ Document Q&A to help resolve technical issues by quickly finding information from technical documentation and troubleshooting guides.

Retrieval-augmented generation (RAG) can help you generate more accurate and informative answers to questions by grounding responses in relevant information retrieved from a knowledge base, such as a vector store. For this task, Document AI OCR (optical character recognition) and PaLM 2 provide powerful capabilities.

The solution and architecture proposed in this post provide a serverless, scalable framework for implementing a RAG-based architecture. Here, we’ll focus on Q&A use cases for long documents.

High-level architecture

For the purpose of this post, we used Document AI, which provides high-quality, enterprise-ready AI document processing models. It’s a fully managed, scalable, and serverless solution capable of processing millions of documents without needing to spin up infrastructure.

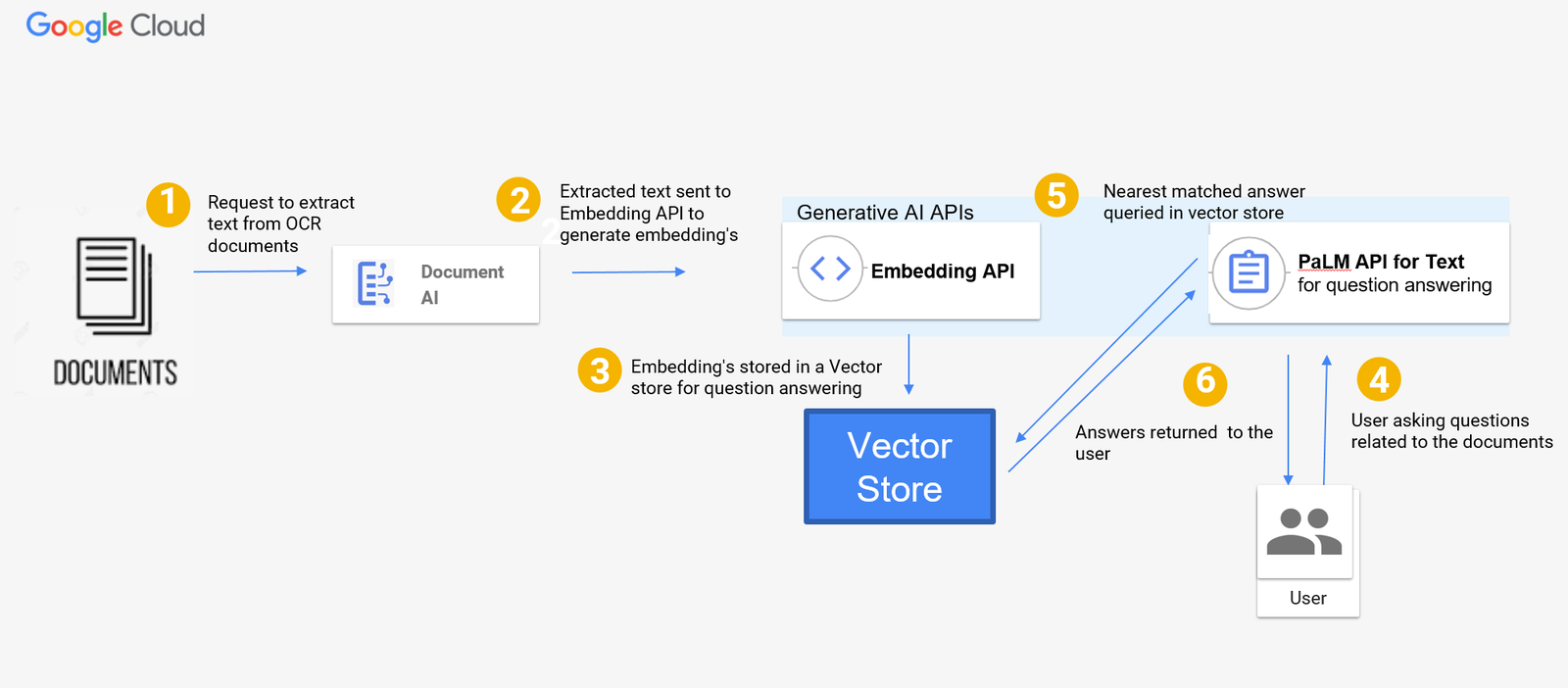

More specifically, Google used Enterprise Document OCR, a pre-trained model that extracts text and layout information from document files. We also used the textembedding-gecko model from Vertex AI to create a text embedding — a vector representation of text — with generative AI. Lastly, we leveraged PaLM2, specifically the Vertex AI text-bison foundation model, to answer questions on the embedding data store. Below is a diagram of the serverless architecture for Document Q&A with Document AI and PaLM2 generative AI foundation models:

The flow in the architecture diagram for the Q&A tool can be described as follows:

- Documents, such as scanned PDFs or images, are sent to Document AI for OCR processing and text extraction.

- The extracted text is sent to the textembedding-gecko model to generate embeddings.

- Embeddings can be stored in any vector store. In this post, we didn’t use a dedicated vector store and instead kept the vectors in an in-memory data structure to show what the implementation looks like for a small set of documents. To scale the solution, you can use Vertex AI Vector Search, a petabyte-scale vector store, to store the embeddings.

- A user asks questions related to the documents.

- The question is embedded and compared against the stored embeddings. The most similar chunks are passed as context to the PaLM 2 text-bison model, which generates the answer.

- The response is returned to the user.
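
The retrieval part of this flow can be sketched with a small in-memory store. This is only an illustration under stated assumptions, not the implementation used in this post: `InMemoryVectorStore`, `VOCAB`, and the toy `embed` function (word counts over a tiny vocabulary) are hypothetical stand-ins for a real embedding model and vector store.

```python
from typing import List, Tuple

# Hypothetical stand-in for an embedding model: counts of a few known words.
VOCAB = ["revenue", "cloud", "carbon", "employees"]


def embed(text: str) -> List[float]:
    words = text.lower().split()
    return [float(words.count(term)) for term in VOCAB]


class InMemoryVectorStore:
    """Keeps (chunk, vector) pairs in a list and searches them linearly."""

    def __init__(self) -> None:
        self.items: List[Tuple[str, List[float]]] = []

    def add(self, chunk: str) -> None:
        self.items.append((chunk, embed(chunk)))

    def search(self, question: str, top_n: int = 1) -> List[str]:
        query = embed(question)
        scored = [
            (sum(q * v for q, v in zip(query, vector)), chunk)
            for chunk, vector in self.items
        ]
        # Highest dot product first.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [chunk for _, chunk in scored[:top_n]]


store = InMemoryVectorStore()
store.add("cloud revenue grew this quarter")
store.add("the report covers carbon neutral operations")
print(store.search("what does it say about carbon", top_n=1))
```

A linear scan like this is fine for a handful of documents; a dedicated vector store becomes worthwhile once the number of chunks grows.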

Implementation

Now that we have explored the architecture, we’ll outline the high-level steps required to build a Question-Answering application using the Vertex AI SDKs.

You can follow along using this notebook, which provides additional step-by-step details for implementation.

Getting Started

For our example, we used Alphabet earnings reports. You can find the PDFs hosted in this public Google Cloud Storage bucket:

    # Copy the files from the GCS bucket to the current local directory
    !gsutil -m cp -r gs://github-repo/documents/docai .

Step 1: Create a Document AI OCR processor

A Document AI processor is an interface between a document file and a machine learning model that performs document processing actions. Processors can be used to classify, split, parse, or analyze a document. Each Google Cloud project needs to create its own processor instances.

The Document AI processor takes a PDF or image file as input and outputs the data in the Document format. We used the Python client library for Document AI to create an Enterprise Document OCR processor and then called that processor to process documents.

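    from google.api_core.client_options import ClientOptions
    from google.api_core.exceptions import AlreadyExists
    from google.cloud import documentai

    # Assumes project_id, location (e.g. "us"), and processor_display_name
    # are already defined for your project.
    # Document AI uses a regional endpoint that must match the processor location.
    client_options = ClientOptions(api_endpoint=f"{location}-documentai.googleapis.com")


    def create_processor(
        project_id: str, location: str, processor_display_name: str
    ) -> documentai.Processor:
        client = documentai.DocumentProcessorServiceClient(client_options=client_options)

        # The full resource name of the location
        # e.g.: projects/project_id/locations/location
        parent = client.common_location_path(project_id, location)

        # Create a processor
        return client.create_processor(
            parent=parent,
            processor=documentai.Processor(
                display_name=processor_display_name, type_="OCR_PROCESSOR"
            ),
        )


    try:
        processor = create_processor(project_id, location, processor_display_name)
        print(f"Created Processor {processor.name}")
    except AlreadyExists as e:
        print(
            f"Processor already exists, change the processor name and rerun this code. {e.message}"
        )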

Step 2: Process the documents

With the Enterprise Document OCR processor you just created, you can start processing documents. To process documents, provide the processor name and file path as input to invoke the document processing function below:

    def process_document(
        processor_name: str,
        file_path: str,
    ) -> documentai.Document:
        client = documentai.DocumentProcessorServiceClient(client_options=client_options)

        # Read the file into memory
        with open(file_path, "rb") as image:
            image_content = image.read()

        # Load Binary Data into Document AI RawDocument Object
        raw_document = documentai.RawDocument(
            content=image_content, mime_type="application/pdf"
        )

        # Configure the process request
        request = documentai.ProcessRequest(name=processor_name, raw_document=raw_document)

        result = client.process_document(request=request)

        return result.document
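
The function above hard-codes `mime_type="application/pdf"`, while the architecture also mentions image inputs. One possible way to pick the MIME type from the file extension is Python's standard `mimetypes` module. This is a sketch: `guess_mime_type` is a hypothetical helper, and you should check the Document AI documentation for the file types your processor accepts.

```python
import mimetypes


def guess_mime_type(file_path: str, default: str = "application/pdf") -> str:
    """Guess a MIME type from the file extension, falling back to a default."""
    mime_type, _ = mimetypes.guess_type(file_path)
    return mime_type or default


print(guess_mime_type("docai/report.pdf"))
print(guess_mime_type("docai/scan.png"))
```

The result could then be passed as the `mime_type` argument of `documentai.RawDocument` instead of the fixed string.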

Step 3: Create data chunks

For some tasks, solutions like Vertex AI Search let you simply select a corpus of documents, but for the most flexibility, a bespoke approach may help your company balance cost, complexity, and accuracy. For these custom implementations, LLMs produce the best results when a document’s text is broken up into small chunks before being added to the prompt. Chunking splits a document into smaller pieces that are easier to process, for example by sentence, paragraph, or section.
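
As an illustration of paragraph-based chunking, the sketch below packs whole paragraphs into chunks up to a character budget. It is not the approach used in this post (which wraps text at a fixed character width); `chunk_by_paragraph` is a hypothetical helper, and it assumes paragraphs are separated by blank lines.

```python
from typing import List


def chunk_by_paragraph(text: str, max_chars: int) -> List[str]:
    """Pack whole paragraphs into chunks of at most max_chars characters.

    A single paragraph longer than max_chars becomes its own oversized chunk.
    """
    chunks: List[str] = []
    current = ""
    for paragraph in text.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            # The next paragraph does not fit: close the current chunk.
            chunks.append(current)
            current = paragraph
    if current:
        chunks.append(current)
    return chunks


sample = "First paragraph.\n\nSecond paragraph.\n\nThird paragraph."
print(chunk_by_paragraph(sample, max_chars=40))
```

Keeping paragraphs intact tends to preserve more local context per chunk than cutting at an arbitrary character position, at the cost of less uniform chunk sizes.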

The current input token limit for the PaLM 2 text-bison@002 model is 8,192 tokens. This means that a single request to the PaLM API can only process a prompt that is up to 8,192 tokens long. If the document is longer than this, it needs to be broken up into smaller chunks. You can also use PaLM 2 32K, which is in preview and can handle up to 32,000 tokens as input.
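
As a rough illustration of working within a token limit, the sketch below estimates whether a piece of text fits in a token budget. The ratio of about four characters per token is only a rule-of-thumb assumption, not the model's tokenizer, and `estimate_tokens` and `fits_in_context` are hypothetical helpers.

```python
import math

# Assumption: roughly 4 characters per token for English text (rule of thumb only).
CHARS_PER_TOKEN = 4

# Set this to the documented input limit of the model you use.
TOKEN_LIMIT = 8192


def estimate_tokens(text: str) -> int:
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(text: str, token_limit: int, reserved_tokens: int = 0) -> bool:
    """Return True if the estimated token count fits within the limit,
    leaving reserved_tokens for the instructions and the question."""
    return estimate_tokens(text) + reserved_tokens <= token_limit


chunk = "x" * 5000  # a chunk of 5,000 characters
print(estimate_tokens(chunk))  # 1250
print(fits_in_context(chunk, token_limit=TOKEN_LIMIT, reserved_tokens=200))
```

For an exact count, use the token-counting facilities of the model API rather than a character heuristic.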

Note: If you already use the PaLM 2 32K text-bison models, you can skip the following step.

    import glob
    import os
    import textwrap
    from typing import Dict, List

    # If you already have a Document AI Processor in your project, assign the full processor resource name here.
    processor_name = processor.name

    # Maximum chunk length in characters (textwrap measures characters, not tokens).
    chunk_size = 5000
    extracted_data: List[Dict] = []

    # Loop through each PDF file in the "docai" directory.
    for path in glob.glob("docai/*.pdf"):
        # Split the path into the name (without extension) and the extension.
        file_name, file_type = os.path.splitext(path)

        print(f"Processing {file_name}")

        # Process the document.
        document = process_document(processor_name, file_path=path)

        if document:
            # Split the text into chunks of the specified size.
            document_chunks = textwrap.wrap(text=document.text, width=chunk_size)

            # Loop through each chunk and create a dictionary with metadata and content.
            for chunk_number, chunk_content in enumerate(document_chunks, start=1):
                # Append the chunk information to the extracted_data list.
                extracted_data.append(
                    {
                        "file_name": file_name,
                        "file_type": file_type,
                        "chunk_number": chunk_number,
                        "content": chunk_content,
                    }
                )
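
It helps to know how `textwrap.wrap` behaves before relying on it for chunking: it measures width in characters rather than tokens, breaks on word boundaries, and by default replaces newlines with spaces. The toy example below uses a deliberately tiny width to make this visible; the text and the `"example"` file name are placeholders.

```python
import textwrap

text = "alpha beta\ngamma delta epsilon"

# width is a character count; each chunk ends on a word boundary,
# and the newline in the input is treated as a plain space.
chunks = textwrap.wrap(text=text, width=12)
print(chunks)  # ['alpha beta', 'gamma delta', 'epsilon']

# Build the same kind of records as the loop above.
records = [
    {"file_name": "example", "chunk_number": n, "content": c}
    for n, c in enumerate(chunks, start=1)
]
print(records[0])
```

Because line breaks are collapsed, paragraph and table structure from the OCR output is not preserved inside the chunks.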

Step 4: Import models

Now, import the PaLM 2 text-bison and textembedding-gecko models from the Vertex AI SDK for Python. The embedding model generates the embeddings in the next step, and both models are used in Step 6 for question answering.

    from vertexai.language_models import TextEmbeddingModel, TextGenerationModel

    generation_model = TextGenerationModel.from_pretrained("text-bison@001")
    embedding_model = TextEmbeddingModel.from_pretrained("textembedding-gecko@001")

Step 5: Getting the embeddings for each chunk

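With the chunks you created in Step 3, you can now call the Embeddings API to generate an embedding for each chunk. If you did not chunk the text in Step 3, you can still use the Embeddings API to generate embeddings for the extracted text.

The code below adds the embedding (a numeric vector representation) of each chunk as a separate column. It assumes the chunk records from Step 3 have been loaded into a pandas DataFrame named pdf_data_sample, with the chunk text in a chunks column (Step 3 stores it under the content key, so rename the column or adjust the code accordingly).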

    import numpy as np

    pdf_data_sample["embedding"] = pdf_data_sample["chunks"].apply(
        lambda x: embedding_model_with_backoff([x])
    )
    pdf_data_sample["embedding"] = pdf_data_sample.embedding.apply(np.array)
    pdf_data_sample.head(2)
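
The snippet above calls `embedding_model_with_backoff`, which is not defined in this post. A helper like that presumably wraps the model call with retries so that transient errors or quota limits do not abort the loop. The following is a generic sketch of such a wrapper using only the standard library; `retry_with_backoff` and `fake_embedding_call` are hypothetical, and the actual helper may be implemented differently.

```python
import functools
import time
from typing import Callable, List


def retry_with_backoff(max_attempts: int = 4, base_delay: float = 0.01) -> Callable:
    """Retry a function on any exception, doubling the delay after each failure."""

    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            delay = base_delay
            for attempt in range(1, max_attempts + 1):
                try:
                    return func(*args, **kwargs)
                except Exception:
                    # Give up after the last attempt; otherwise wait and retry.
                    if attempt == max_attempts:
                        raise
                    time.sleep(delay)
                    delay *= 2

        return wrapper

    return decorator


calls = {"count": 0}


@retry_with_backoff(max_attempts=4, base_delay=0.01)
def fake_embedding_call(texts: List[str]) -> List[float]:
    # Stand-in for a remote model call: fails twice, then succeeds.
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("temporary failure")
    return [0.1, 0.2, 0.3]


print(fake_embedding_call(["some chunk"]), "after", calls["count"], "calls")
```

In real code you would typically catch only retryable errors (for example, quota or availability errors) rather than every exception, and use longer delays.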

The function below returns the relevant context for a question. You can call it for every new question. It embeds the question, searches the vector store where the embeddings are stored, and returns the most relevant chunks.

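    from typing import Tuple

    import numpy as np
    import pandas as pd


    def get_context_from_question(
        question: str, vector_store: pd.DataFrame, sort_index_value: int = 2
    ) -> Tuple[str, pd.DataFrame]:
        # Embed the question with the same model used for the chunks.
        query_vector = np.array(embedding_model_with_backoff([question]))
        # Score every chunk by the dot product of its embedding with the query vector.
        vector_store["dot_product"] = vector_store["embedding"].apply(
            lambda row: np.dot(row, query_vector)
        )
        # Keep the top N chunks with the highest scores.
        top_matched = vector_store.sort_values(by="dot_product", ascending=False)[
            :sort_index_value
        ].index
        top_matched_df = vector_store.loc[top_matched, ["file_name", "chunks"]]
        # Join the matched chunks into a single context string.
        context = "\n".join(top_matched_df["chunks"].values)
        return context, top_matched_df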
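
The function above ranks chunks by raw dot product. That matches a cosine-similarity ranking only when the vectors have unit length; whether a given embedding model returns normalized vectors is something to verify for your model. As a sketch with made-up two-dimensional vectors, the example below shows the two rankings diverging for unnormalized inputs.

```python
import math
from typing import List


def dot(a: List[float], b: List[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def cosine(a: List[float], b: List[float]) -> float:
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


query = [1.0, 0.0]
# Made-up 2-D "embeddings": chunk_a points the same way as the query,
# chunk_b points elsewhere but has a much larger magnitude.
chunks = {"chunk_a": [1.0, 0.0], "chunk_b": [3.0, 4.0]}

best_by_dot = max(chunks, key=lambda name: dot(query, chunks[name]))
best_by_cosine = max(chunks, key=lambda name: cosine(query, chunks[name]))
print(best_by_dot)     # chunk_b (dot products: 1.0 vs 3.0)
print(best_by_cosine)  # chunk_a (cosines: 1.0 vs 0.6)
```

If your embeddings are not normalized, dividing each vector by its length before storing it makes the dot product equivalent to cosine similarity.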

Step 6: Calling PaLM text generation APIs to ask questions

Next, let’s cover how to use the PaLM text-bison API with the get_context_from_question(question, vector_store, sort_index_value) function you created in the previous step to get answers from your documents.

Using the code below, pass the top N results from the embedding similarity search to the PaLM 2 text-bison model as part of the prompt. The top N chunks are selected by their similarity to the user’s question and serve as the context for the generative AI model.

    # Your question for the documents
    question = "When did google become carbon neutral?"

    # Get the most relevant chunks from all the chunks in the vector store.
    context, top_matched_df = get_context_from_question(
        question,
        vector_store=pdf_data_sample,
        sort_index_value=2,  # Top N results to pick from embedding vector search
    )

Next, use the code below to create a prompt for question answering. The prompt combines the retrieved context (the text of the best-matching chunks from the vector store) with the question for the PaLM 2 text-bison model.

    prompt = f""" Answer the question as precisely as possible using the provided context. \n\n
    Context: \n {context}\n
    Question: \n {question} \n
    Answer:
    """

    # Call the PaLM API on the prompt.
    print("PaLM Predicted:", text_generation_model_with_backoff(prompt=prompt), "\n\n")

The response you get from the PaLM APIs will contain the answer to your question.
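
If you ask many questions, it can be convenient to wrap the prompt template in a small function. The sketch below is one possible shape; `build_prompt` and its `max_context_chars` parameter are hypothetical, and the character cap is a crude guard against overlong prompts rather than a token-accurate limit.

```python
def build_prompt(context: str, question: str, max_context_chars: int = 20000) -> str:
    """Assemble a question-answering prompt from retrieved context."""
    # Truncate the context so the prompt stays within a rough size budget.
    trimmed_context = context[:max_context_chars]
    return (
        "Answer the question as precisely as possible using the provided context.\n\n"
        f"Context:\n{trimmed_context}\n\n"
        f"Question:\n{question}\n\n"
        "Answer:"
    )


prompt = build_prompt(
    context="Chunk one text.\nChunk two text.",
    question="What does chunk two say?",
)
print(prompt)
```

The resulting string can be passed to the text generation call in the same way as the inline prompt above.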

Conclusion

Congrats! If you’ve reached the end of this post, we hope that you now understand how to use Document AI and PaLM 2 for question answering. You should now know how to:

- Extract text from PDF documents using a Document AI OCR processor.

- Use the textembedding-gecko model to generate embeddings for the extracted text.

- Use the PaLM text-bison model to answer questions on the embeddings data store.

Please feel free to explore the solution and contribute to the code in the GitHub repository.

We hope this post showed you how easy it is to build a serverless, RAG-based architecture with Document AI and large language models. You may also consider other Google Cloud products that may better suit your needs, such as:

- Document AI Custom Extractor to extract specific fields from documents like contracts, invoices, W2s & bills of lading.

- Document AI Summarizer to customize summaries based on your preferences for length and format with no training required. It can provide summaries for documents up to 250 pages long, and you won’t need to manage document chunks or model context windows.

- Vertex AI Search and Conversation, an enterprise end-to-end RAG solution, to search and summarize digital PDF, HTML, and TXT documents and to build a chatbot on top of those documents.

We can’t wait to see how you use it to transform workflows in your organization!