
OmniHuman-1.5: Evolving Digital Avatars from Reaction to Cognition

OmniHuman Today

Enterprises are already experimenting with the first generation of our avatar technology — OmniHuman-1.0.

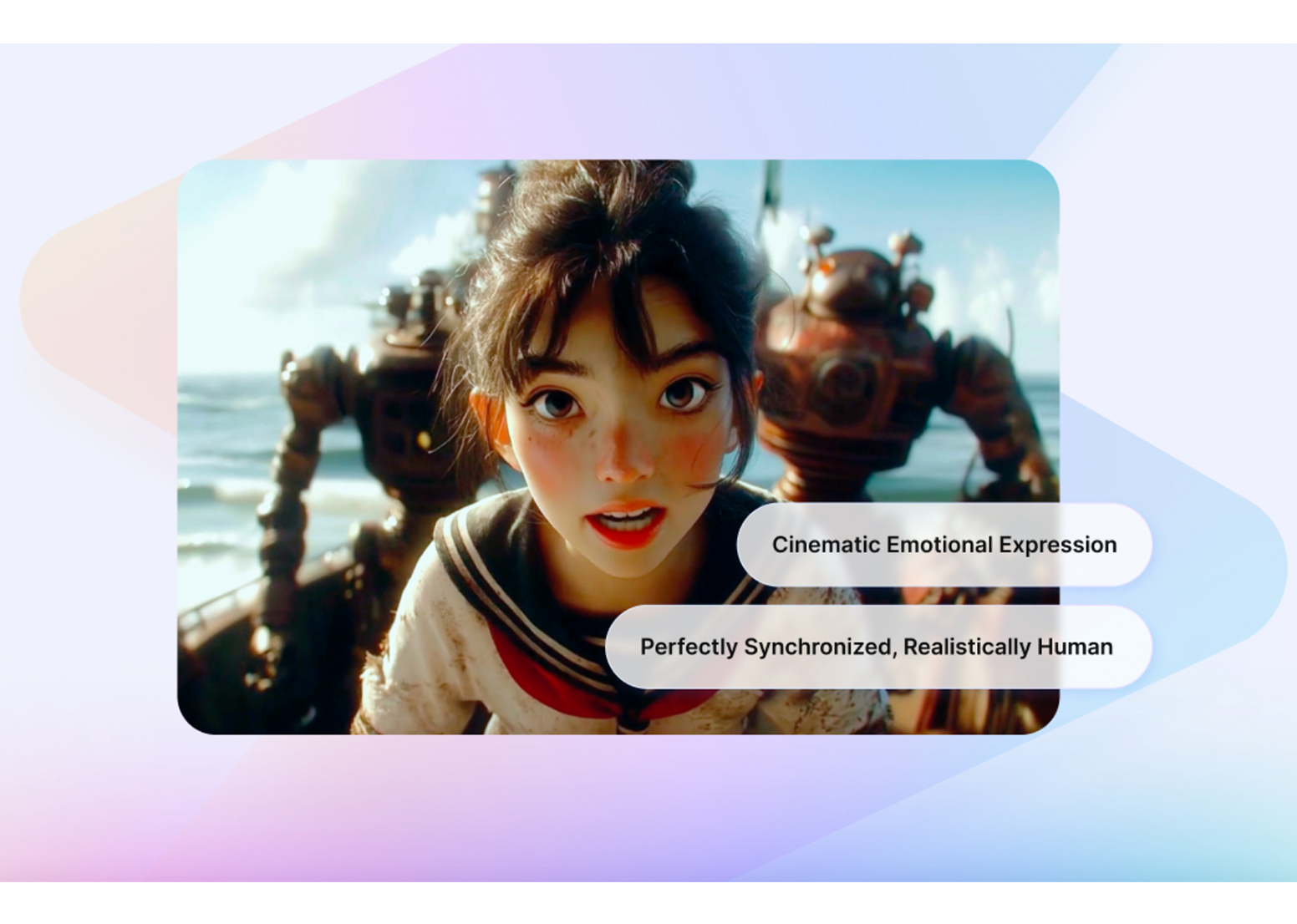

These avatars synchronize lip movements and gestures with audio, forming a reliable foundation for content creation, ecommerce, marketing, animation and game development.

But this is only the beginning. ByteDance research teams continually push the boundaries of AI to create technology that doesn’t just react but also reasons.

Looking Ahead: OmniHuman-1.5

ByteDance R&D will introduce OmniHuman-1.5, the next chapter in cognitive avatars. Unlike traditional systems that simply mirror sound, OmniHuman-1.5 incorporates reasoning and context, bringing avatars closer than ever to human-like understanding.

Here are the four breakthroughs shaping OmniHuman-1.5:

- Avatars with a Mind: System 1 + System 2 Cognition

A dual-system framework inspired by human thinking:

- System 1: fast, instinctive reactions (lip-sync, gestures).

- System 2: slower, deliberate reasoning (context-aware actions).

Why it matters: Avatars no longer just react; they understand.

- Reflection for Long-Form Coherence

A built-in reflection mechanism allows avatars to “re-plan” their actions mid-sequence, preventing repetitive or illogical behavior.

Why it matters: Enables sustained, natural conversations — critical for livestreaming and ecommerce.
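To make the idea of re-planning concrete, here is a minimal toy sketch. It is not OmniHuman's implementation: the gesture names, the `propose` planner, and the `max_repeats` threshold are all hypothetical, chosen only to show how a reflection step might catch a repetitive plan and swap in a different action.

```python
from typing import List

# Hypothetical gesture vocabulary; not taken from OmniHuman.
GESTURES = ["nod", "point", "open_palms", "shrug"]


def propose(segment_index: int) -> str:
    """Stand-in planner that deliberately tends to repeat itself."""
    return GESTURES[0] if segment_index % 4 != 3 else GESTURES[1]


def reflect(history: List[str], candidate: str, max_repeats: int = 2) -> str:
    """Return the candidate, or a different gesture if it would repeat too often."""
    recent = history[-max_repeats:]
    if len(recent) == max_repeats and all(g == candidate for g in recent):
        # Re-plan: pick the first gesture that differs from the candidate.
        return next(g for g in GESTURES if g != candidate)
    return candidate


def plan_sequence(num_segments: int) -> List[str]:
    """Plan one gesture per segment, reflecting on each proposal before committing."""
    history: List[str] = []
    for i in range(num_segments):
        history.append(reflect(history, propose(i)))
    return history


plan = plan_sequence(8)
print(plan)
```

In this toy version, the reflection step only looks at the last two gestures. A production system would presumably reason over much richer context, such as the script and the scene.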

- Multi-Person & Multi-Entity Capability

Supports multiple avatars in the same scene with coordinated dialogue and turn-taking. Works not only with humans but also with mascots, anime, and stylized characters.

Why it matters: Expands use cases into retail mascots, storytelling, and role-play simulations.

- Symmetric Multimodal Fusion

Balances audio, text, and visual cues equally, rather than relying mainly on audio.

Why it matters: Avatars don’t just match rhythm — they capture intent.
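As a rough illustration of what "balancing cues equally" could mean, the sketch below compares an audio-dominant weighting with a symmetric one. It is an assumption-laden toy: the feature vectors, the weights, and the `fuse` helper are made up for this example and do not describe how OmniHuman-1.5 actually combines modalities.

```python
from typing import Dict, List


def fuse(features: Dict[str, List[float]], weights: Dict[str, float]) -> List[float]:
    """Weighted average of same-length feature vectors, one per modality."""
    total = sum(weights[name] for name in features)
    length = len(next(iter(features.values())))
    fused = [0.0] * length
    for name, vector in features.items():
        share = weights[name] / total  # normalized contribution of this modality
        for i, value in enumerate(vector):
            fused[i] += share * value
    return fused


# Toy, made-up feature vectors for one moment of a clip.
# Dimension 0 might stand for "speech rhythm", dimension 1 for "intent".
features = {
    "audio": [1.0, 0.0],
    "text": [0.0, 1.0],
    "visual": [0.0, 1.0],
}

# Audio-dominant weighting: the fused signal mostly follows the audio cue.
audio_dominant = fuse(features, {"audio": 0.8, "text": 0.1, "visual": 0.1})

# Symmetric weighting: each modality contributes one third.
symmetric = fuse(features, {"audio": 1.0, "text": 1.0, "visual": 1.0})

print(audio_dominant, symmetric)
```

With the symmetric weights, the second dimension, which only text and visuals carry in this toy data, ends up with the larger share of the fused signal instead of being drowned out by audio.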

Unlocking New Possibilities for Enterprises

While OmniHuman-1.5 represents the next leap forward, enterprises can benefit today from OmniHuman-1.0 through BytePlus. Current applications include:

- E-commerce & Livestreaming: Avatars, including stylized or animated figures, can demonstrate products or act as brand mascots. Suited to product demos, livestream interactions, and short-form shopping clips, they help retailers engage audiences with immersive, on-brand experiences.

- Advertising & Brand Marketing: Brands can prototype and launch expressive avatar-led content, from memorable storytelling and creative product placements to culturally adaptive campaigns. These short, hyper-real sequences strengthen audience connection and make campaigns more dynamic across markets and formats.

- Animation & Game Development: Animators and game developers can generate lifelike character performances, from expressive dialogue clips and cinematic reactions to full-body gestures. Ideal for rapid prototyping and creative iteration, these sequences reduce reliance on motion capture and unlock richer, more immersive worlds.

The Road Ahead

OmniHuman is the foundation for a new era of enterprise applications — from communication and commerce to creativity.
